Edition 39

Early-Stage AI for Appium Test Automation

Perhaps the buzziest of the buzzwords in tech these days is "AI" (Artificial Intelligence), or "AI/ML" (throwing in Machine Learning). To most of us, these phrases seem like magical fairy dust that promises to make the hard parts of our tech jobs go away. To be sure, AI is (in my opinion) largely over-hyped, or at least its methods and applications are largely misunderstood and therefore assumed to be much more magical than they are.

It might seem surprising then that this Appium Pro edition is all about how you can use AI with Appium! It's a bit surprising to me too, but by working with the folks at Test.ai, the Appium project has developed an AI-powered element finding plugin for use specifically with Appium. What could this possibly be about?

First, let's discuss what I mean by "element finding plugin". We recently added to Appium the ability for third-party developers to create "plugins" that can use an Appium driver together with their own unique capabilities to find elements. As we'll see below, users can access these plugins simply by installing the plugin as an NPM module in their Appium directory, and then using the customFindModules capability to register the plugin with the Appium server. (Go ahead and check out the element finding plugin docs if you want a fuller scoop.)

The first plugin we worked on within this new structure was one that incorporates a machine learning model from Test.ai designed to classify app icons, the training data for which was just open-sourced. This is a model which can tell us, given the input of an icon, what sort of thing the icon represents (for example, a shopping cart button, or a back arrow button). The application we developed with this model was the Appium Classifier Plugin, which conforms to the new element finding plugin format.

Basically, we can use this plugin to find icons on the screen based on their appearance, rather than knowing anything about the structure of our app or needing to ask developers for internal identifiers to use as selectors. For the time being the plugin is limited to finding elements by their visual appearance, so it really only works for elements which display a single icon. Luckily, these kinds of elements are pretty common in mobile apps.

This approach is more flexible than existing locator strategies (like accessibility id, or image) in many cases, because the AI model is trained to recognize icons without needing any context, and without requiring them to match only one precise image style. What this means is that using the plugin to find a "cart" icon will work across apps and across platforms, without needing to worry about minor differences in how each app draws that icon.

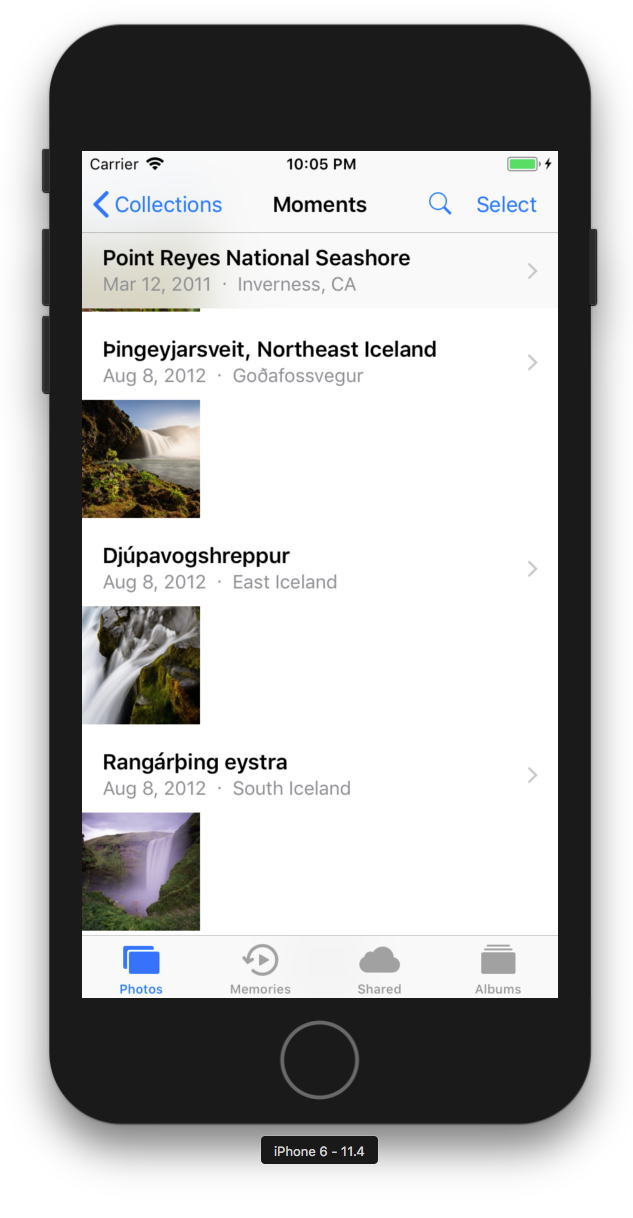

So let's take a look at a concrete example, demonstrating the simplest possible use case. If you fire up an iOS simulator you have access to the Photos application, which looks something like this:

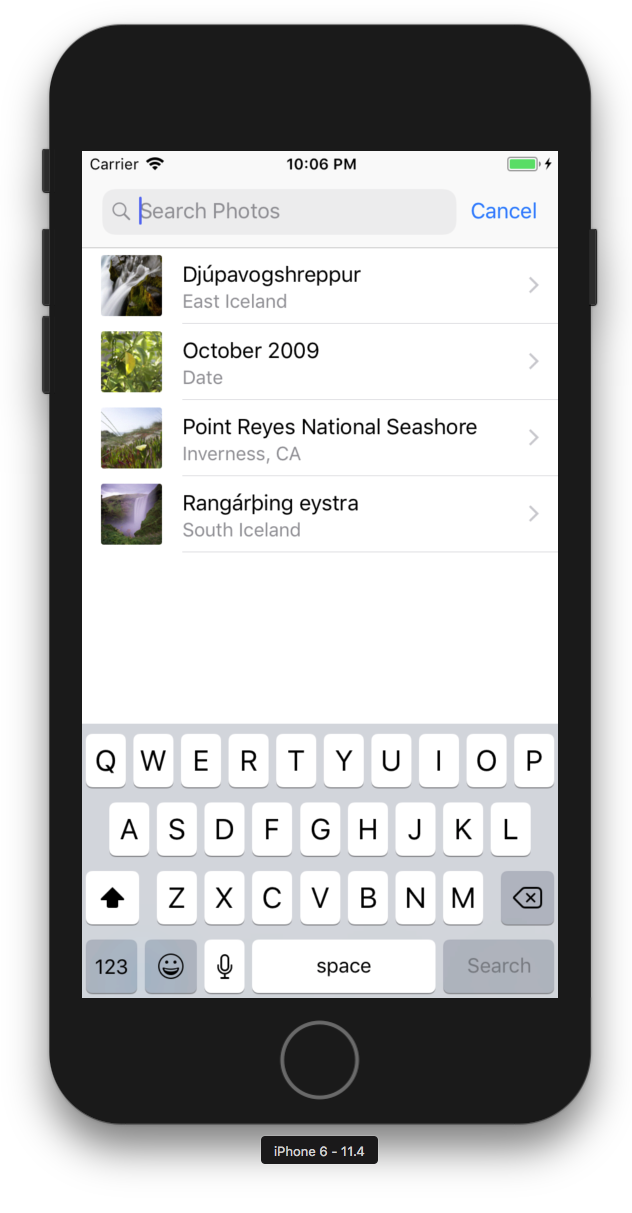

Notice the little magnifying glass icon near the top which, when clicked, opens up a search bar.

Let's write a test that uses the new plugin to find and click that icon. First, we need to follow the setup instructions from the plugin's README, to make sure everything will work. Then, we can set up our Desired Capabilities for running a test against the Photos app:

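    import org.openqa.selenium.remote.DesiredCapabilities;

    // Standard capabilities for the built-in Photos app on an iOS simulator
    DesiredCapabilities caps = new DesiredCapabilities();
    caps.setCapability("platformName", "iOS");
    caps.setCapability("platformVersion", "11.4");
    caps.setCapability("deviceName", "iPhone 6");
    caps.setCapability("bundleId", "com.apple.mobileslideshow");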

Now we need to add some new capabilities: customFindModules (to tell Appium about the AI plugin we want to use), and shouldUseCompactResponses (because the plugin's setup instructions tell us we need to set this capability):

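    import java.util.HashMap;

    // Map a shortcut name ("ai") to the plugin's module name
    HashMap<String, String> customFindModules = new HashMap<>();
    customFindModules.put("ai", "test-ai-classifier");
    caps.setCapability("customFindModules", customFindModules);
    // Required by the plugin's setup instructions
    caps.setCapability("shouldUseCompactResponses", false);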

You can see that customFindModules is a capability which has some internal structure: in this case "ai" is the shortcut name for the plugin that we can use internally in our test, and "test-ai-classifier" is the fully-qualified reference that Appium will need to be able to find and require the plugin when we request elements with it.
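To make that internal structure easier to see, here is a minimal sketch that builds the same capabilities out of plain Java maps. It is only an illustration of the nesting: the class name CapsShape is made up for this example, and plain maps stand in for DesiredCapabilities so that it runs without any Appium dependencies.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Illustration only: plain maps stand in for DesiredCapabilities so the
// nesting of customFindModules is visible. No Appium classes are used.
class CapsShape {
    static Map<String, Object> build() {
        // Inner structure: shortcut name -> module name of the plugin
        Map<String, String> customFindModules = new LinkedHashMap<>();
        customFindModules.put("ai", "test-ai-classifier");

        // Outer structure: the capabilities used in this article
        Map<String, Object> caps = new LinkedHashMap<>();
        caps.put("platformName", "iOS");
        caps.put("platformVersion", "11.4");
        caps.put("deviceName", "iPhone 6");
        caps.put("bundleId", "com.apple.mobileslideshow");
        caps.put("customFindModules", customFindModules);
        caps.put("shouldUseCompactResponses", false);
        return caps;
    }

    public static void main(String[] args) {
        // Prints the capabilities, with customFindModules as a nested map
        System.out.println(build());
    }
}
```

The point to notice is that customFindModules is itself a map, so registering a second plugin would just mean adding another shortcut/module pair to it.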

Once we've done all this, finding the element is super simple:

    driver.findElement(MobileBy.custom("ai:search"));

Here we're using the new custom locator strategy so that Appium knows we want a plugin, not one of its built-in locator strategies. Then, we're prefixing our selector with ai: to let Appium know which plugin specifically we want to use for this request (because there could be multiple). Of course, since we are in fact only using one plugin for this test, we could do away with the prefix (and, for good measure, use the other find command style, too):
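The prefix rule can be sketched in a few lines of plain Java. This is a hypothetical helper, not Appium's actual implementation: the class and method names are invented, and the behavior for an unprefixed selector with several registered plugins (throwing an error) is my own assumption. It only mirrors the rule as described here: a known shortcut before the colon picks the plugin, and with a single registered plugin the prefix is optional.

```java
import java.util.Collections;
import java.util.Map;

// Hypothetical helper, NOT Appium's real code: it only mirrors the prefix
// rule described in the article.
class CustomSelector {
    // Returns a two-element array: { module name, selector passed to the plugin }
    static String[] resolve(String selector, Map<String, String> modules) {
        int colon = selector.indexOf(':');
        if (colon > 0) {
            String module = modules.get(selector.substring(0, colon));
            if (module != null) {
                // Known shortcut: strip the prefix and hand over the rest
                return new String[] { module, selector.substring(colon + 1) };
            }
        }
        if (modules.size() == 1) {
            // Only one plugin registered, so the prefix is optional
            String onlyModule = modules.values().iterator().next();
            return new String[] { onlyModule, selector };
        }
        // Assumption: an ambiguous selector is treated as an error
        throw new IllegalArgumentException(
            "Cannot tell which plugin should handle '" + selector + "'");
    }

    public static void main(String[] args) {
        Map<String, String> modules =
            Collections.singletonMap("ai", "test-ai-classifier");
        String[] prefixed = resolve("ai:search", modules);
        String[] bare = resolve("search", modules);
        System.out.println(prefixed[0] + " <- " + prefixed[1]);
        System.out.println(bare[0] + " <- " + bare[1]);
    }
}
```

With one plugin registered, both "ai:search" and "search" resolve to the same plugin and the same selector, which is why the prefix can be dropped in this test.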

    driver.findElementByCustom("search");

And that's it! As I mentioned above, this technology has some significant limitations at the current time: it can only reliably find elements that display one of the icons the model has been trained to detect. On top of that, the process is fairly slow, both in the plugin code (since it has to retrieve every element on screen in order to send information into the model) and in the model itself. All of these areas will see improvement in the future, however. And even if this particular plugin isn't useful for your day-to-day, it demonstrates that concrete applications of AI in the testing space are not only possible, but actual!
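Given those limitations, one way to experiment with the plugin without making a test depend on it entirely is to try the AI-based find first and fall back to a conventional locator if it fails. The sketch below is my own suggestion rather than anything the plugin provides: the FallbackFind class is hypothetical, and it uses plain Supplier lambdas with string stand-ins so that it runs without an Appium session.

```java
import java.util.Arrays;
import java.util.List;
import java.util.function.Supplier;

// Hypothetical helper, not part of Appium or the classifier plugin.
class FallbackFind {
    // Runs each strategy in order and returns the first result that does
    // not throw. If every strategy fails, the last failure is rethrown.
    static <T> T firstSuccessful(List<Supplier<T>> strategies) {
        RuntimeException lastFailure = null;
        for (Supplier<T> strategy : strategies) {
            try {
                return strategy.get();
            } catch (RuntimeException e) {
                lastFailure = e;
            }
        }
        if (lastFailure == null) {
            throw new IllegalArgumentException("no strategies given");
        }
        throw lastFailure;
    }

    public static void main(String[] args) {
        // Stand-ins for real finds. In an actual test, the first lambda
        // might wrap driver.findElement(MobileBy.custom("ai:search")) and
        // the second a conventional locator of your choosing.
        List<Supplier<String>> strategies = Arrays.asList(
            () -> { throw new IllegalStateException("AI find failed"); },
            () -> "element from fallback locator");
        System.out.println(firstSuccessful(strategies));
    }
}
```

Since a failed element find in the Java client surfaces as a runtime exception, the same helper should work with real driver calls in place of the stand-ins, though each failed attempt still costs whatever time the plugin takes to give up.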

As always, you can check out a full (hopefully) working example at Appium Pro's GitHub repo. Happy (locator-angst-free) testing!